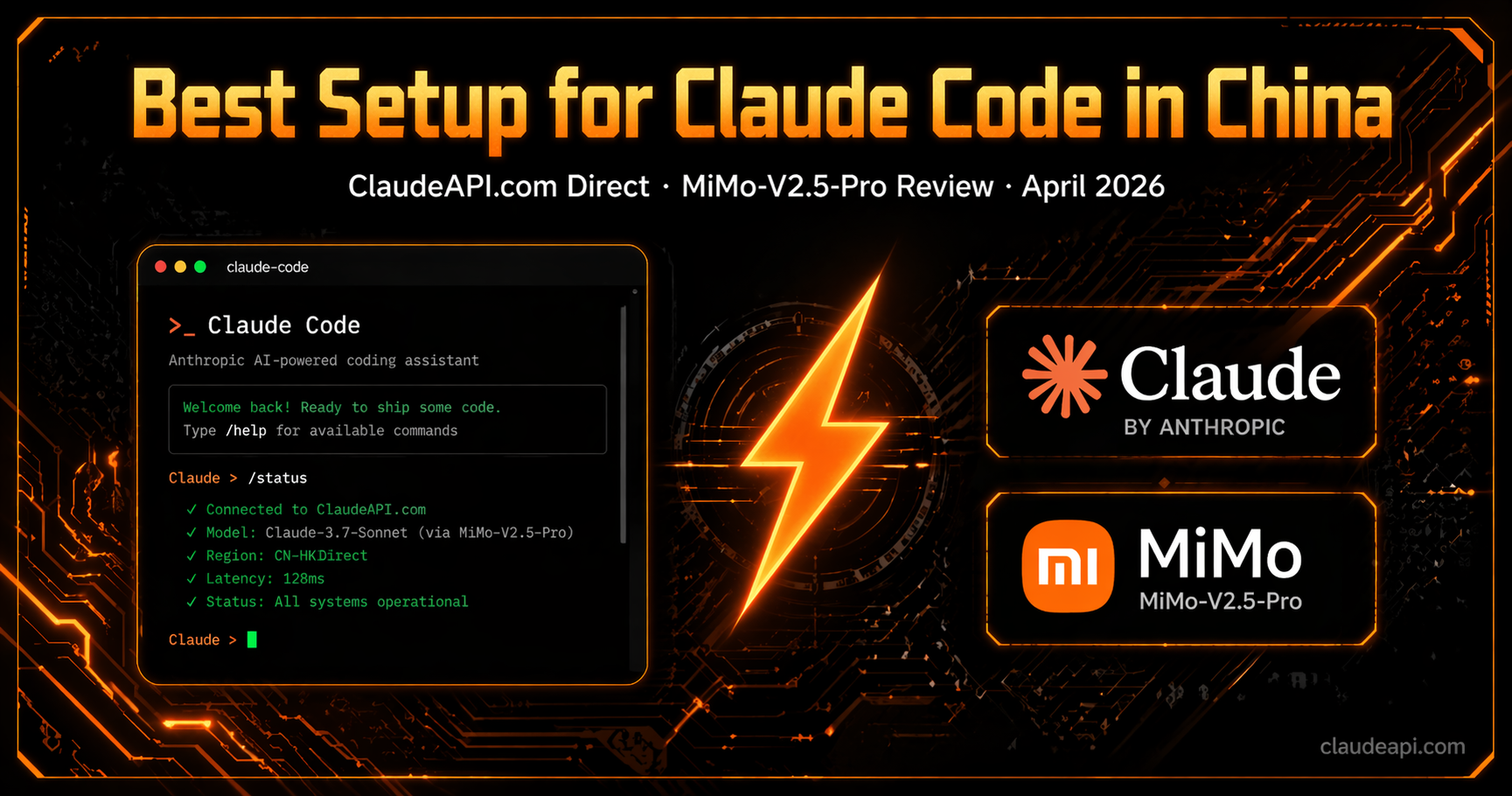

I Tested Every New AI Model in Claude Code This Month. Here’s What Actually Works.

Claude Code + ClaudeAPI.com is still the fastest path to reliable Claude Opus 4.6 access — unified API, pay-as-you-go, low latency. MiMo-V2.5-Pro is a solid budget fallback, but it doesn’t replace Claude.

April 2026 Was Insane

Five major model drops in two weeks:

- Claude Opus 4.7 — Anthropic’s latest flagship

- Kimi K2.6 — Moonshot AI’s new release

- MiMo-V2.5-Pro — Xiaomi stealth-launched an API with zero fanfare

- GPT-5.5 — OpenAI reclaimed the top spot on nearly every benchmark

- DeepSeek-V4 preview — open-source, million-token context

Any one of these deserves its own deep dive.

But if you use Claude Code daily, you already know the real question isn’t “which model is best.” It’s:

How do I get stable, low-latency Claude access — and what’s my fallback when I need to cut costs?

Short answer: Claude Code + ClaudeAPI.com remains the best primary setup. For budget runs, MiMo-V2.5-Pro is the closest thing to a real Claude Code experience at a fraction of the price.

Model-by-Model Breakdown: What Matters for Claude Code

DeepSeek-V4: Skip It (For Now)

DeepSeek-V4 preview launched April 24 with open-source weights. On paper, it looks great:

- Million-token context window

- Claims best-in-class agent capabilities among open-source models

- API already live: set `model_name` to `deepseek-v4-pro` or `deepseek-v4-flash`

Our take: not ready for Claude Code.

Benchmarks don’t capture what Claude Code actually demands — sustained multi-turn tool calls, precise instruction following across long agent loops. DeepSeek-V4 falls short here. It doesn’t match GLM-5.1 or MiMo-V2.5-Pro in real agentic workflows.

Wait for the stable release.

GPT-5.5: Best Model on Paper, 2× the Price

GPT-5.5 just launched and tops Claude Opus 4.7 on nearly every benchmark — coding, agent tasks, document processing. Its frontend capabilities have improved too: when extending a project with an existing design system, GPT-5.5 does a better job respecting the visual language already in place.

But the pricing:

| Model | Input | Output |
|---|---|---|
| GPT-5.5 | $5/M tokens | $30/M tokens |
| GPT-5.4 | $2.50/M tokens | $15/M tokens |
| Claude Opus 4.7 | ~$5/M tokens | ~$25/M tokens |

That’s double GPT-5.4 and 20% more than Opus on output. Context in Codex is capped at 400k for now (1M coming with full API rollout).

Strong model. Expensive model. The cost compounds fast in heavy Claude Code-style workflows.
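To see how these per-token prices turn into a session bill, here is a minimal Python sketch using the list prices from the table. The workload (20M input tokens, 1M output tokens) is a hypothetical agent session, not a measurement, and caching or batch discounts are ignored.

```python
# List prices in USD per million tokens (input, output), taken from the table above.
# The Claude Opus 4.7 figures are marked approximate (~) there.
PRICES = {
    "GPT-5.5": (5.00, 30.00),
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.7": (5.00, 25.00),
}


def session_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost in USD, ignoring caching and batch discounts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical heavy agent session: 20M input tokens, 1M output tokens.
    for name in PRICES:
        print(f"{name:<16} ${session_cost(name, 20_000_000, 1_000_000):,.2f}")
```

For this mix the sketch gives $130 for GPT-5.5, $65 for GPT-5.4, and $125 for Opus 4.7. Because GPT-5.5 is priced at exactly twice GPT-5.4 on both input and output, the 2× ratio holds for any token mix; against Opus the input prices match, so the 20% premium applies only to the output share of the bill.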

MiMo-V2.5-Pro: The Budget Pick That Actually Works

Xiaomi dropped MiMo-V2.5-Pro with no announcement — API included.

Specs at a glance:

- Context window: 1M tokens

- Pricing (0–256k): ~$1 / $3 per million tokens (input/output)

- Pricing (256k–1M): ~$2 / $6 per million tokens (input/output)

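Compared to Claude Opus 4.6 ($5 / $25 per million tokens), MiMo is at least 60% cheaper on every line item: roughly 80% cheaper on input and 88% cheaper on output in the 0–256k tier, and 60% / 76% cheaper in the 256k–1M tier. It just launched and adoption is still low, so API latency is excellent right now.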
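A minimal Python sketch for checking the savings against Opus 4.6 at a given prompt size. It assumes each request is billed entirely at the tier its prompt length falls into and that "256k" means 256,000 tokens; neither detail is confirmed by Xiaomi's listing as quoted here, and the "~" prices are treated as exact.

```python
from typing import Tuple

# ASSUMPTION: "256k" means 256,000 tokens and the whole request is billed at the
# tier its prompt length falls into. The "~" prices are treated as exact.
TIER_LIMIT = 256_000
CONTEXT_LIMIT = 1_000_000
MIMO_SHORT = (1.00, 3.00)  # USD per 1M tokens (input, output), 0-256k tier
MIMO_LONG = (2.00, 6.00)   # USD per 1M tokens (input, output), 256k-1M tier
OPUS_46 = (5.00, 25.00)    # USD per 1M tokens (input, output)


def mimo_rates(prompt_tokens: int) -> Tuple[float, float]:
    """Return MiMo's (input, output) price per 1M tokens for a prompt of this size."""
    if not 0 <= prompt_tokens <= CONTEXT_LIMIT:
        raise ValueError("prompt must fit in the 1M-token context window")
    return MIMO_SHORT if prompt_tokens <= TIER_LIMIT else MIMO_LONG


def savings_vs_opus(prompt_tokens: int) -> Tuple[float, float]:
    """Fractional (input, output) savings of MiMo relative to Opus 4.6 list prices."""
    mimo_in, mimo_out = mimo_rates(prompt_tokens)
    opus_in, opus_out = OPUS_46
    return 1 - mimo_in / opus_in, 1 - mimo_out / opus_out


if __name__ == "__main__":
    for prompt in (100_000, 500_000):
        save_in, save_out = savings_vs_opus(prompt)
        print(f"{prompt:>9,} prompt tokens: {save_in:.0%} cheaper input, "
              f"{save_out:.0%} cheaper output")
```

A 100,000-token prompt lands in the lower tier (80% / 88% savings); a 500,000-token prompt lands in the upper tier (60% / 76%).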

Real-World Testing: 3 Full Projects

We ran three complete projects through Claude Code with MiMo-V2.5-Pro as the backend:

Project 1: Data Analytics Platform (Lark/Feishu Integration)

After describing requirements conversationally, MiMo produced a complete tech stack selection, architecture design, and metrics framework. Final output: a working visualization and analytics site connected to a Lark database — per-article completion rates, share rate analysis, auto-generated diagnostic reports.

Project 2: Custom Server Skill Deployment

OAuth authentication, enterprise identity verification, container isolation, SSO integration. MiMo-V2.5-Pro completed it in one pass with zero corrections. For reference, several other models we tested previously failed outright at the deployment step.

Project 3: Million-Word Document Analysis

We fed it a 1.6M-word novel and asked for character relationship graphs, plot timelines, and character profiles — output as an interactive webpage. It used the full 1M context window and produced complete, logically coherent output.

Token breakdown:

- Input: 1.2M

- Output: 157.1K

- Cache hits: 29.6M (cache is temporarily free)

- Actual cost: far below expectations
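The breakdown gives token counts but no dollar figure, so the MiMo list prices quoted earlier only allow a rough bound. This worked estimate assumes cache hits cost nothing (as noted above) and prices 1.2M input and 0.1571M output tokens entirely at one tier or the other; the real bill falls between the two lines depending on how requests split across tiers.

```latex
\begin{aligned}
C_{\text{low}}  &= 1.2 \times \$1 + 0.1571 \times \$3 = \$1.20 + \$0.47 \approx \$1.67 \\
C_{\text{high}} &= 1.2 \times \$2 + 0.1571 \times \$6 = \$2.40 + \$0.94 \approx \$3.34
\end{aligned}
```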

The One Weakness

Frontend aesthetics. UI generated from scratch is functional but generic. Pair it with a frontend design skill and the gap closes. Core logic and code quality are solid — this is a minor trade-off.

Our April 2026 Recommendations

| Priority | Setup | Best For |
|---|---|---|
| 🥇 Primary | Claude Code + Claude Opus 4.6 via ClaudeAPI.com | All scenarios. Direct access, unified API, latency < 200ms, flexible payment |
| 🥈 Budget Alt A | Claude Code + MiMo-V2.5-Pro | Tight budget, fast fallback. Stable tool calling, currently very fast |
| 🥉 Budget Alt B | Claude Code + GLM-5.1 | Strong all-around, but Coding Plan slots are limited right now |
| ❌ Not Recommended | DeepSeek-V4 | Poor real-world Claude Code performance. Wait for stable release |

Pro tip: When GLM-5.1’s Coding Plan is full, MiMo-V2.5-Pro is your best bridge. Low traffic means fast responses — but that window won’t last.

Why We Use ClaudeAPI.com as Our Primary Access Point

Using Claude Code effectively comes down to two things: reliable API access and predictable costs.

ClaudeAPI.com is a unified API proxy that's fully compatible with the Anthropic API format. Point Claude Code's `ANTHROPIC_BASE_URL` at it and everything works; the service also supports OpenAI-format clients and custom scripts.
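"Compatible with the Anthropic API format" means the request is identical and only the host changes. Here is a minimal standard-library Python sketch that builds, without sending, a Messages API request whose host comes from `ANTHROPIC_BASE_URL`. The `/v1/messages` path and the `anthropic-version: 2023-06-01` header follow Anthropic's public API; `proxy.example.com` and the model ID `claude-opus-4-6` are placeholders, so substitute the base URL and model name your provider documents.

```python
import json
import os
import urllib.request
from typing import Optional

DEFAULT_BASE_URL = "https://api.anthropic.com"


def build_messages_request(prompt: str, model: str, api_key: str,
                           base_url: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) an Anthropic Messages API request.

    The base URL comes from the argument, then ANTHROPIC_BASE_URL, then the
    official endpoint. Path, headers, and body are the same in every case.
    """
    base = (base_url or os.environ.get("ANTHROPIC_BASE_URL") or DEFAULT_BASE_URL).rstrip("/")
    body = {
        "model": model,
        "max_tokens": 1024,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base}/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder proxy URL, model ID, and key; substitute your provider's values.
    req = build_messages_request("Hello", "claude-opus-4-6", "sk-placeholder",
                                 base_url="https://proxy.example.com")
    print(req.get_method(), req.full_url)
```

Passing the result to `urllib.request.urlopen` would send it. Switching between direct access and a proxy only changes `base_url` (or the environment variable), which is the property Claude Code relies on.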

How It Compares

| | api.anthropic.com (Direct) | ClaudeAPI.com |
|---|---|---|
| Global access | Depends on your region and network | Multi-node infrastructure, optimized routing |
| Average latency | Varies | < 200ms |
| Claude Code compatibility | Native | 100% compatible (same API format) |
| Payment | Credit card only | Credit card, Alipay, WeChat, crypto, and more |
| Uptime | Depends on network conditions | 99.8% |

When to Use Claude vs. MiMo

MiMo is cheaper. But Claude’s advantages in creative reasoning, complex multi-file code generation, and long-document comprehension remain unmatched by any alternative model today.

Use Claude when quality matters. Fall back to MiMo when budget matters.

TL;DR

April 2026 — models are shipping faster than ever, but the optimal Claude Code stack is clear:

- Use Claude whenever possible. ClaudeAPI.com gives you reliable access with a unified API, sub-200ms latency, and flexible payment. Setup takes three minutes.

- Fall back to MiMo-V2.5-Pro when needed. Stable tool calling, million-token context, and currently fast due to low adoption.

- Use cc-switch to manage both configs and swap between them instantly.
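The idea behind swapping configs can be sketched as rewriting the `env` block of Claude Code's `~/.claude/settings.json`, which Claude Code applies to each session. This is a hypothetical illustration, not cc-switch's implementation. It assumes each provider exposes an Anthropic-format endpoint that accepts a bearer token via `ANTHROPIC_AUTH_TOKEN`; every URL, token, and model ID below is a placeholder.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Dict

# HYPOTHETICAL profiles: every URL, token, and model ID below is a placeholder.
PROFILES: Dict[str, Dict[str, str]] = {
    "claude": {
        "ANTHROPIC_BASE_URL": "https://proxy.example.com",
        "ANTHROPIC_AUTH_TOKEN": "sk-claude-placeholder",
        "ANTHROPIC_MODEL": "claude-opus-4-6",
    },
    "mimo": {
        "ANTHROPIC_BASE_URL": "https://mimo.example.com/anthropic",
        "ANTHROPIC_AUTH_TOKEN": "sk-mimo-placeholder",
        "ANTHROPIC_MODEL": "mimo-v2.5-pro",
    },
}


def switch_profile(name: str, settings_path: Path) -> Dict[str, Any]:
    """Point Claude Code at profile `name` by rewriting the env block of settings.json.

    Keys outside "env", and env vars that no profile manages, are preserved.
    """
    settings = json.loads(settings_path.read_text()) if settings_path.exists() else {}
    env = settings.get("env", {})
    # Clear every profile-managed key first so values from the old profile cannot leak.
    for profile in PROFILES.values():
        for key in profile:
            env.pop(key, None)
    env.update(PROFILES[name])
    settings["env"] = env
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2))
    return settings


if __name__ == "__main__":
    # Demo on a throwaway file; for real use pass Path.home() / ".claude" / "settings.json".
    demo = Path(tempfile.mkdtemp()) / "settings.json"
    switch_profile("claude", demo)
    print(json.dumps(switch_profile("mimo", demo)["env"], indent=2))
```

Clearing all profile-managed keys before applying the new profile means a variable set by one backend never lingers after switching to the other, while unrelated settings survive untouched.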
